A Generative AI Reference Architecture For Platforms And Products

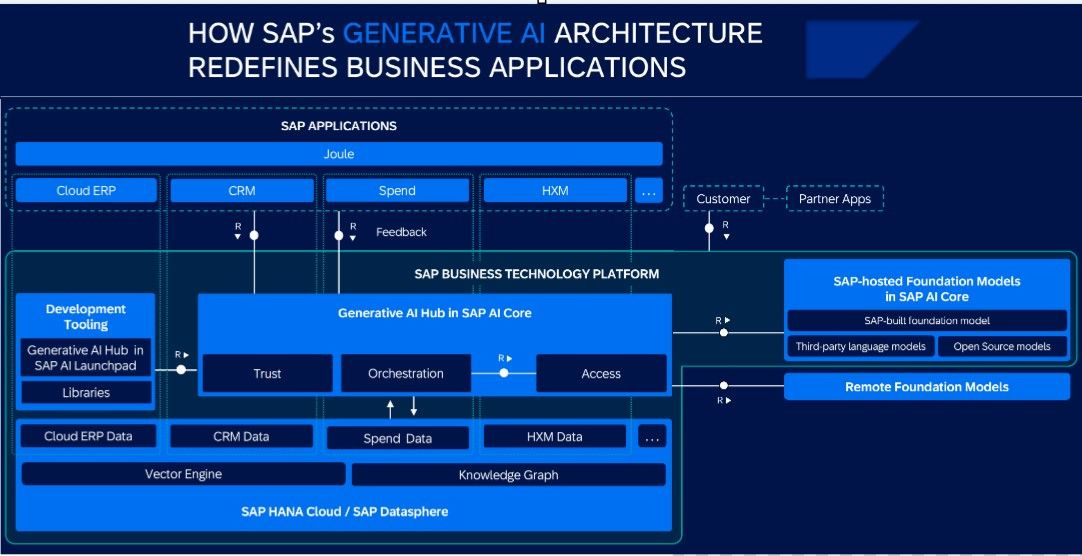

This is a billion-dollar image, but only if you can interpret it and apply it to your specific business or customer applications. In this article, I’ll break it down into its core design pieces and generalize them into higher-level frameworks that you can apply to a range of products and platforms.

You’ll walk away from this article with a better understanding of Generative AI product design and what functional elements LLMs can feasibly support. You’ll also learn to connect design and functionality with monetization.

Generative AI As An Application Access Layer

The common thinking is that LLMs provide access to their internal knowledge graphs. LLMs assemble those knowledge graphs by training on massive amounts of data. We’ve seen the shortcomings of that productization approach over the last year.

Assembling enough data to build an “everything to everyone” knowledge graph isn’t feasible. The LLM’s reliability will always fail to meet some users’ needs due to gaps in the data and unexpected ways that users interact with the LLM. The access layer in this image describes a way to leverage LLMs that aligns with their strengths and avoids reliability weaknesses.

Joule is an LLM user interface. In the image, the layer beneath Joule is SAP’s suite of enterprise apps. Copilot is another Generative AI user interface, integrated into Microsoft’s application stack and following the same design paradigm. Bard and ChatGPT are working towards this objective but don’t have all the pieces built yet.

Take Joule away, and you’ll see the old workflow. Users selected the app they needed to do their job, and completing workflows often involved bouncing between apps. Joule changes that workflow and takes over as a single interface to all of SAP’s apps. This design pattern takes users from workflows by app to workflows by task and, soon, workflows by outcome.

Users go to Joule and tell it what task they need to do or the outcome they want. Joule is the interface between the user and the app or apps required to complete that task. It can ask the user for additional information or documents. It can orchestrate approval requests or send emails.
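To make the interaction pattern concrete, here is a minimal sketch of the "ask for what's missing, then act" loop described above. It is not how Joule is implemented; the `Task` class, the field names, and the action names are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Task:
    """A user task as the interface layer might track it (hypothetical)."""
    name: str
    required: List[str]  # inputs the apps need before they can act
    provided: Dict[str, str] = field(default_factory=dict)
    actions: List[str] = field(default_factory=list)  # e.g. approvals, emails

    def missing(self) -> List[str]:
        # Required inputs the user has not supplied yet.
        return [name for name in self.required if name not in self.provided]


def next_step(task: Task) -> str:
    """Either ask the user for missing inputs or start the follow-up actions."""
    missing = task.missing()
    if missing:
        return "Please provide: " + ", ".join(missing)
    return "Running: " + ", ".join(task.actions)


# Illustrative task: the names below are assumptions, not SAP APIs.
task = Task(
    name="purchase_order",
    required=["vendor", "amount"],
    provided={"vendor": "Acme"},
    actions=["request_approval", "send_confirmation_email"],
)
first_reply = next_step(task)    # asks for the missing amount
task.provided["amount"] = "1200"
second_reply = next_step(task)   # all inputs present, so actions run
```

The point of the sketch is that the user never picks an app. The interface tracks what the task needs, and the approval request and email are just steps it orchestrates once the inputs are complete.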

The LLM relies on its internal knowledge graph to understand the user’s request, classify the workflow, and route it to the appropriate applications. It converts application responses into a human-readable format that enables collaborative task execution. It reads documents the user provides and either extracts the information the apps request or answers the user’s questions about those documents.
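The classify, route, and translate steps can be sketched as a small pipeline. This is an illustration under stated assumptions, not SAP's or Microsoft's design: a keyword matcher stands in for the LLM's classification, and the app functions, workflow names, and response fields are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class AppResponse:
    app: str
    payload: dict


# Hypothetical stand-ins for enterprise apps behind the access layer.
def expenses_app(request: str) -> AppResponse:
    return AppResponse("expenses", {"status": "draft_created", "needs": ["receipt"]})


def hr_app(request: str) -> AppResponse:
    return AppResponse("hr", {"status": "leave_request_submitted", "needs": []})


# Each workflow maps to the ordered list of apps needed to complete it.
WORKFLOWS: Dict[str, List[Callable[[str], AppResponse]]] = {
    "expense_report": [expenses_app],
    "time_off": [hr_app],
}

# Assumption: keyword matching replaces the LLM's classification step.
KEYWORDS = {
    "expense_report": ("expense", "receipt", "reimburse"),
    "time_off": ("vacation", "leave", "time off"),
}


def classify_workflow(request: str) -> Optional[str]:
    """Return the first workflow whose keywords appear in the request."""
    text = request.lower()
    for workflow, words in KEYWORDS.items():
        if any(word in text for word in words):
            return workflow
    return None


def to_human_readable(resp: AppResponse) -> str:
    """Turn a structured app response into a sentence for the user."""
    message = f"{resp.app}: {resp.payload['status'].replace('_', ' ')}"
    if resp.payload["needs"]:
        message += f" (still needed: {', '.join(resp.payload['needs'])})"
    return message


def handle(request: str) -> List[str]:
    """Classify the request, route it to each app, and translate the replies."""
    workflow = classify_workflow(request)
    if workflow is None:
        return ["Could you tell me more about the task you want to complete?"]
    return [to_human_readable(app(request)) for app in WORKFLOWS[workflow]]


replies = handle("I need to file an expense for my trip")
```

In a real product the classifier and the response formatter would be LLM calls, and the apps would be existing services. The shape stays the same: the model sits between the user and the apps, and the apps remain the source of truth for the work itself.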